Shan Yang

Researcher · Multi-modal Reasoning

I work on multi-modal reasoning: making vision-language models reason about physics, geometry, and the world they actually see. My focus is on the unsexy half of the stack: audited training data, hard evaluation benchmarks, and RL recipes that survive the jump from text-only chain-of-thought to multi-modal reasoning.

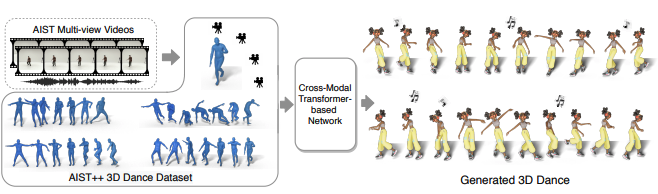

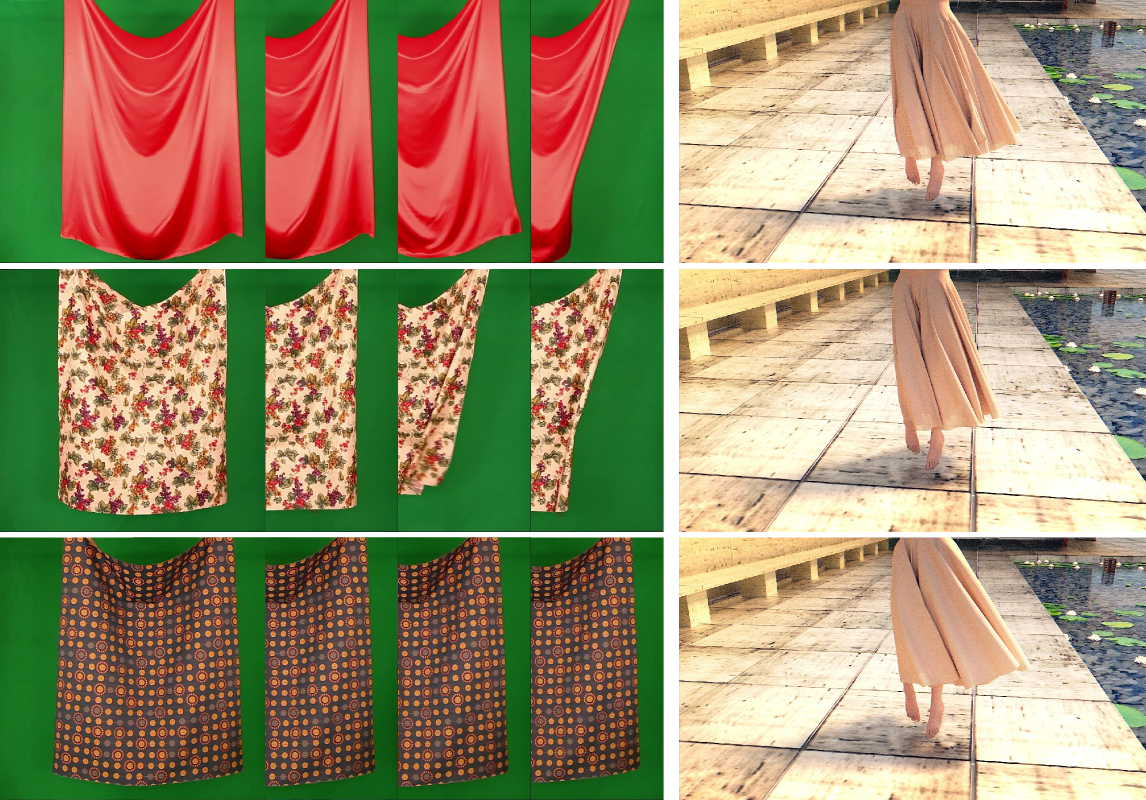

Currently Staff Applied Scientist at Adobe Foundry (Adobe's foundation-model research org), post-training foundation models with SFT, DPO, and RL. Previously Tech Lead at Amazon (GenAI Live Action Studio, Amazon Video Search) and Senior Research SDE at Google Research (multi-modal modeling, AIST++). PhD from UNC-Chapel Hill with Prof. Ming C. Lin on learning physical parameters from video.

Currently exploring: multi-modal GRPO and chain-of-thought recipes for VLMs (Physics-o1, in preparation).

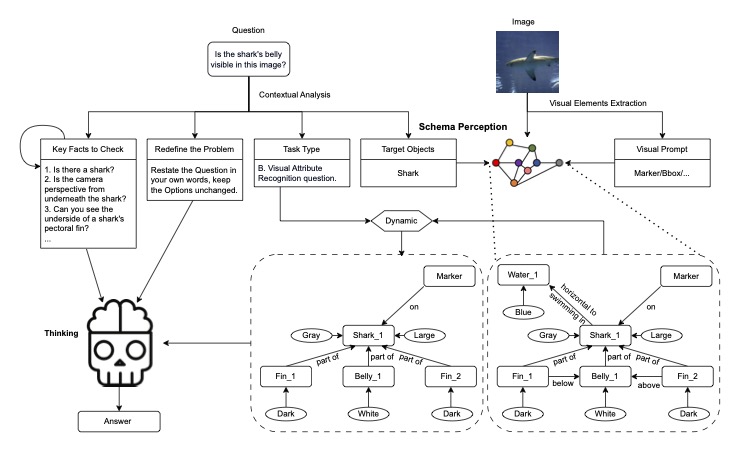

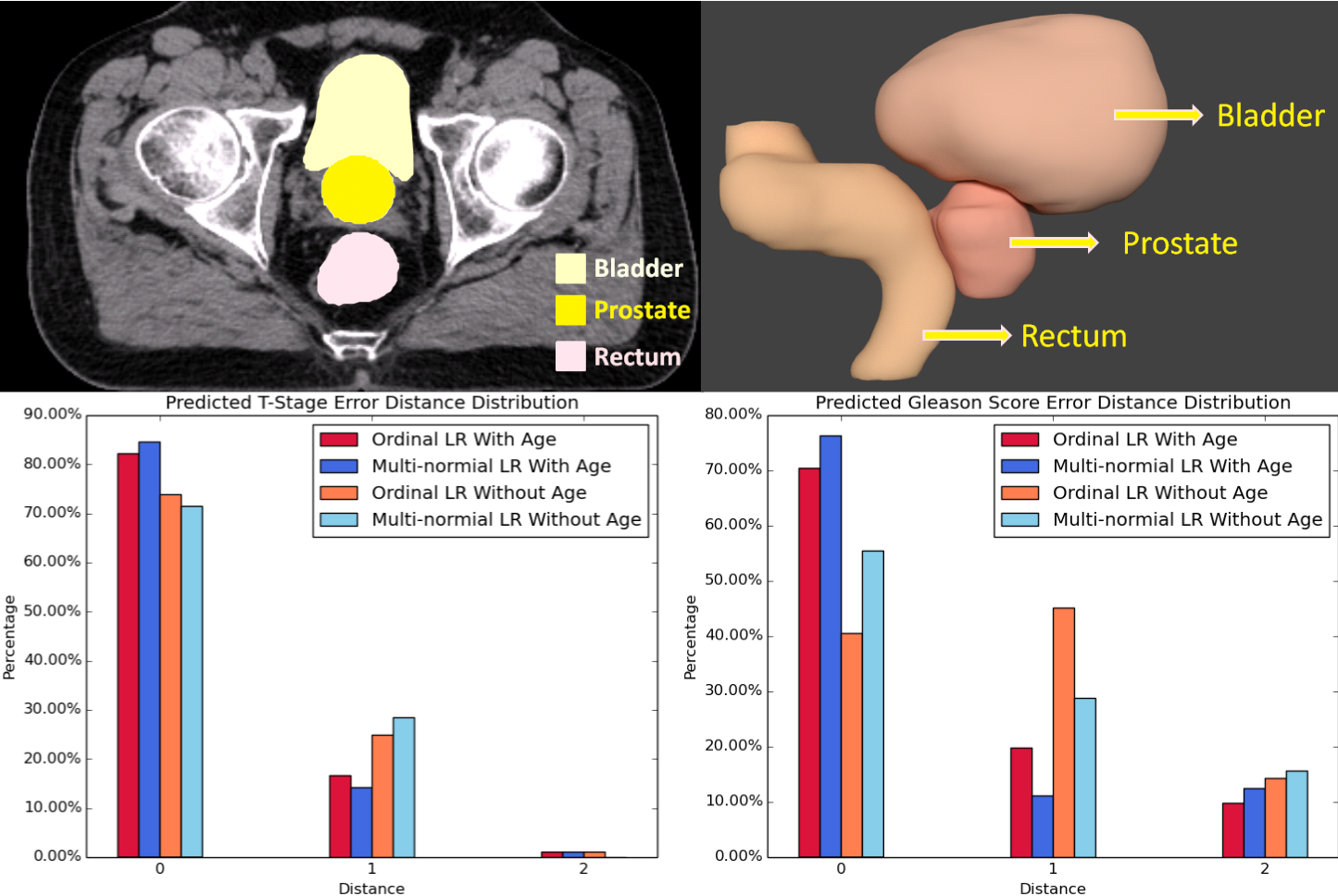

Physics-R1: An Audited Olympiad Corpus and Recipe for Visual Physics Reasoning

Visual physics reasoning for vision-language models: a 2,434-record audited training corpus, a 500-question novel-source olympiad benchmark (PhysOlym-A), and an RL recipe that advances the state of the art on visual physics reasoning at the 7B scale. All artifacts are openly released.

Selected Publications

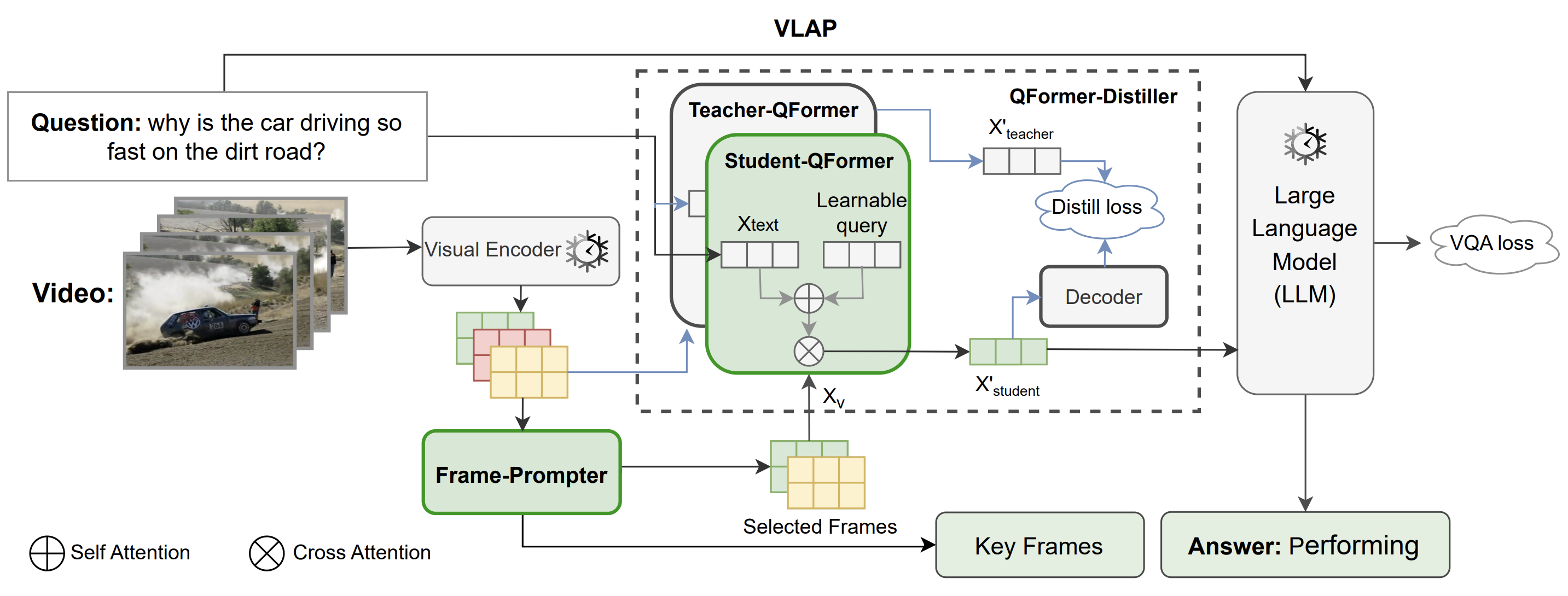

VLAP: Efficient Video-Language Alignment via Frame Prompting and Distilling for Video Question Answering

ECCV 2024

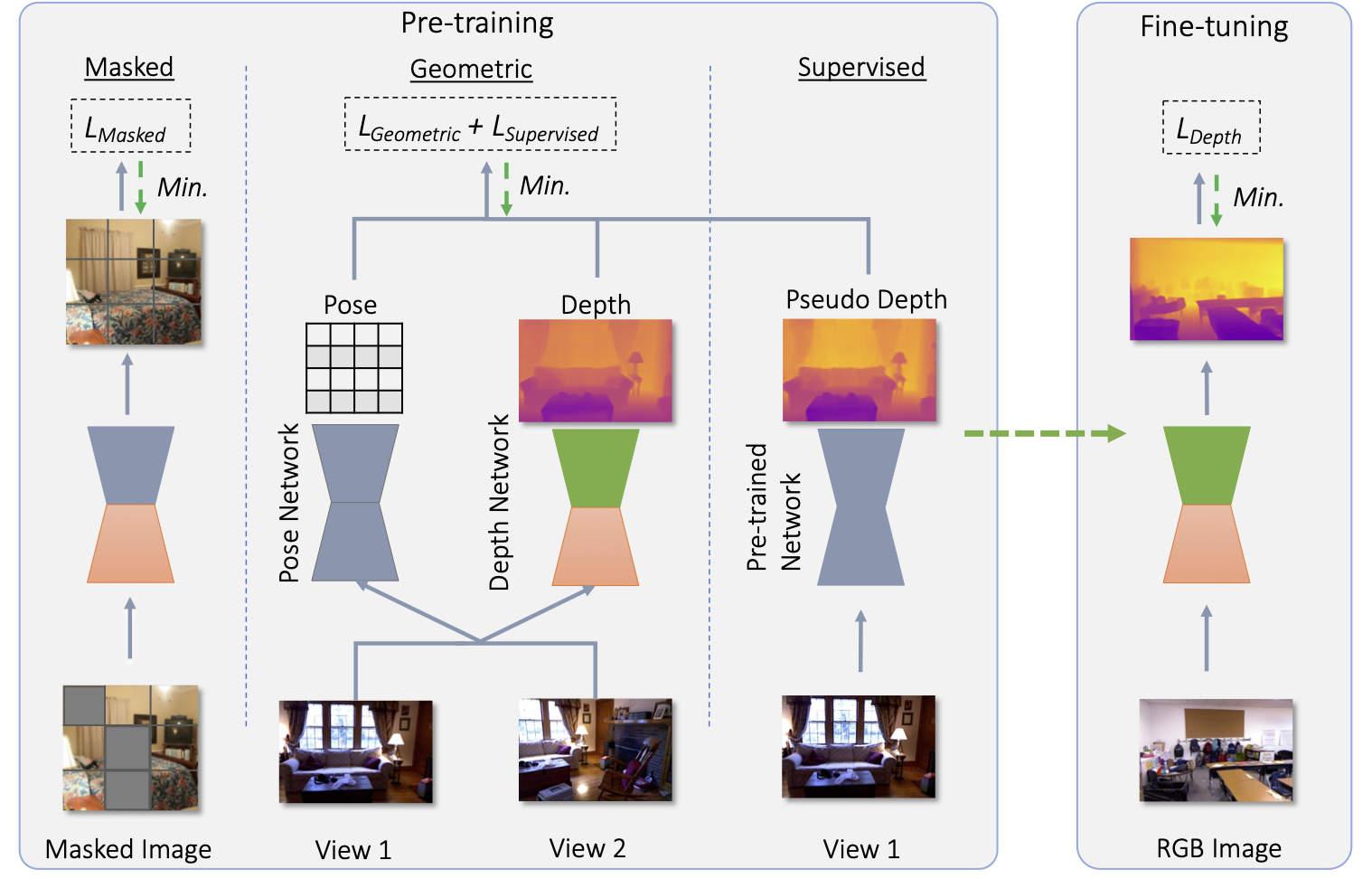

MeSa: Masked, Geometric, and Supervised Pre-training for Monocular Depth Estimation

NeurIPS 2023 Workshop SSLTheoryPractice

Optical Mouse: 3D Mouse Pose From Single-View Video

CVPR 2021 (CV4Animal Workshop)

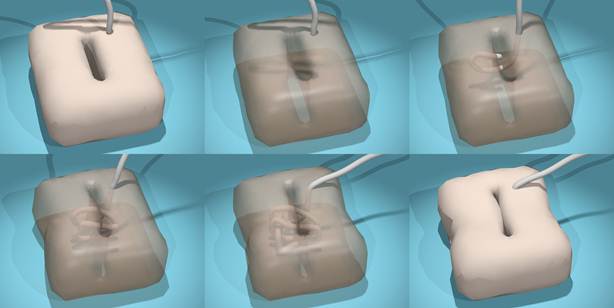

Real-time Simulation for Buried Suture

CARS 2012

Open Source & Releases

2,434-record audited training corpus for visual physics reasoning, with provenance and license audit.

500-question novel-source olympiad benchmark for evaluating visual physics reasoning in VLMs.

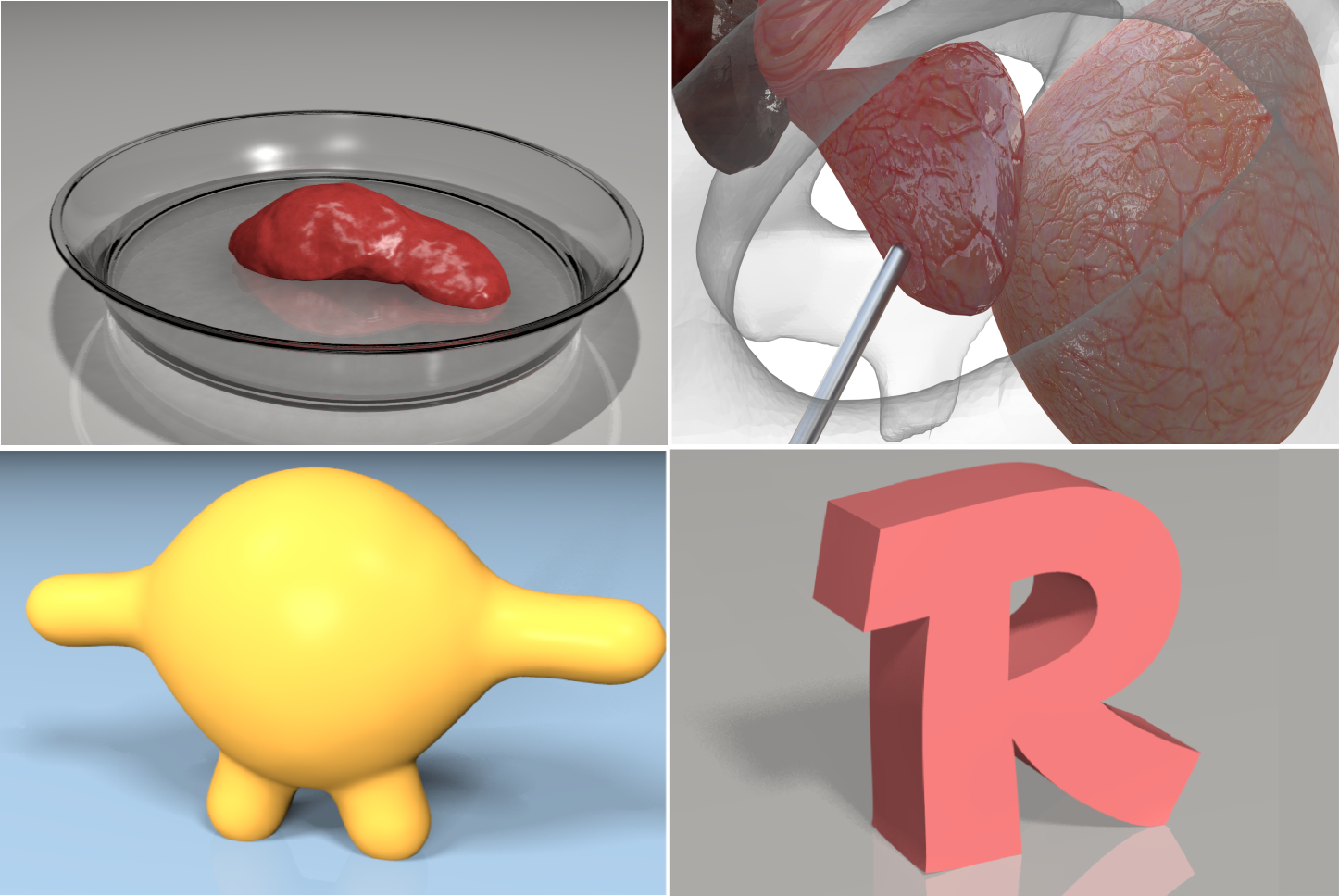

11.6K episodes of physics-grounded video annotations for world-model training.

Loaders and tooling for the AIST++ 3D dance dataset (from AI Choreographer, ICCV 2021).

AI-powered research project manager with pixel-art agent teams (Scout, Theorist, Architect, Coder).

Personal log of learning reinforcement learning: notes, experiments, and insights.